By Jeffrey Carl

Boardwatch Magazine was the place to go for Internet Service Provider industry news, opinions and gossip for much of the 1990s. It was founded by the iconoclastic and opinionated Jack Rickard in the commercial Internet’s early days, and by the time I joined it had a niche but influential following among ISPs, particularly for its annual ranking of Tier 1 ISPs and through the ISPcon tradeshow. Writing and speaking for Boardwatch was one of my fondest memories of the first dot-com age.

Dig through any Internet engineer’s “toolkit” of favorite utilities, and you’ll find (probably right under the empty pizza boxes) the traceroute program. Users have now joined the bandwagon, using traceroute to find out why they can’t get to site A or what link is killing their throughput to site B.

Traceroute uses already-stored information in a packet header for queries on each part of the path to a specified host. With it, you can find out how you get to a site, why it’s failing or slow, and what might be causing the problem. Traceroute seems like a simple and perfect tool, but it can sometimes give misleading answers due to the complexities of Internet routing. While it should never be relied on to give the complete answer to any question about paths, peering or network problems, it is a very good place to start.

Traceroute, in its most basic form, allows you to print out a list of all the intermediate routers between two destinations on the Internet. It allows you to diagram a path through the network. More important to IP network administrators, however, is traceroute’s potential as a powerful tool for diagnosing breakdowns between your network and the outside world, or perhaps even within the network itself.

The Internet is vast and not all service providers are willing to talk to one another. As a result, your connection to your favorite web or FTP site is often grudgingly left to the hands (or fiber) of a middleman, perhaps your upstream, or a peer of theirs, or even more remote than that. When there is performance trouble or even a total failure in reaching that site, you might be left scratching your head, trying to determine who is at fault once you’ve determined it’s not a fault within your control.

The traceroute utility is a probe that will enable you to better determine where the breakdown begins on that path. Once you have some experience with the program, you’ll be able to see when performance trouble is likely a case of oversaturation of a network along the way, or that your target is simply hosted behind a chain of too many different providers. You will be able to see when your upstream has likely made a mistake routing your requests out to the world, and be able to place one call to their NOC; a call that would resolve the situation much more quickly than scratching your head and anxiously phoning your sales representative.

Performing a Traceroute

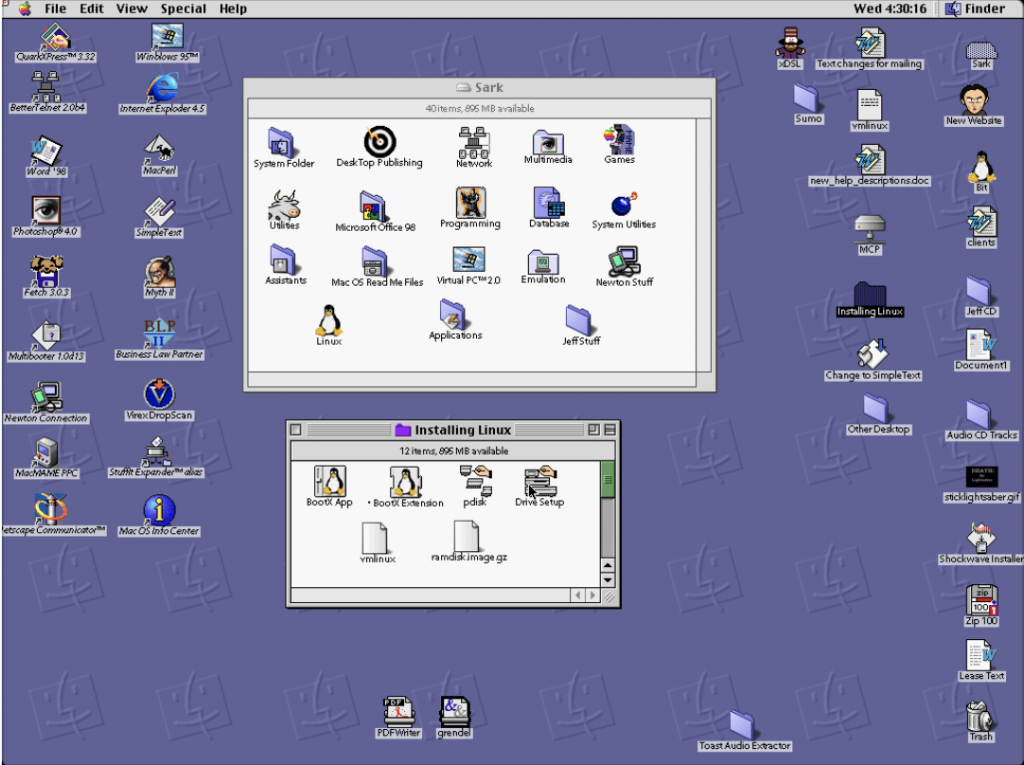

Initiating a traceroute is a very simple procedure (although interpreting the results is not). Traceroutes can be done from any Unix or Windows computer on which you have an account; MacOS users will need to download the shareware program IP Net Monitor, available from shareware sites or from its home page (listed under Other Traceroute Resources at the end of this article). There are also numerous traceroute gateways around the Internet which can be accessed via the web.

From a Unix shell account, you can usually just type traceroute at the prompt, followed by any of the Unix traceroute options, followed by the host or IP you’re attempting to trace to. If you receive a “command not found” message, it indicates either that traceroute isn’t installed on the computer (very unlikely), or it’s simply installed in a location which isn’t in your command path. To fix this, you may need to edit your path or specify the absolute location of the program – on many systems, it’s at /usr/sbin/traceroute or /sbin/traceroute.
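
As a rough illustration of the path check described above, here is a small Python sketch that first looks for traceroute on the command path and then falls back to the two absolute locations mentioned. The function name `find_traceroute` and its parameters are invented for this example; only `shutil.which` and `os.access` come from the standard library, and the fallback list is an assumption that will not hold on every system.

```python
import os
import shutil

# Fallback locations mentioned in the text; other systems may differ.
FALLBACK_PATHS = ("/usr/sbin/traceroute", "/sbin/traceroute")


def _default_is_executable(path):
    """True if `path` is an existing file that the current user may run."""
    return os.path.isfile(path) and os.access(path, os.X_OK)


def find_traceroute(which=shutil.which,
                    is_executable=_default_is_executable,
                    fallbacks=FALLBACK_PATHS):
    """Return a path to traceroute, or None if it cannot be found.

    `which` and `is_executable` are injectable (hypothetical parameters)
    so the logic can be tried without depending on the local machine.
    """
    # The normal case: traceroute is somewhere in the command path.
    found = which("traceroute")
    if found:
        return found

    # Otherwise try the absolute locations directly.
    for candidate in fallbacks:
        if is_executable(candidate):
            return candidate
    return None


if __name__ == "__main__":
    print(find_traceroute() or "traceroute: command not found")
```

If the function returns one of the fallback paths, that is the absolute location you would type at the prompt (or add to your path).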

Windows users with an active Internet connection can drop to a DOS prompt and type tracert followed by the hostname or IP address they want to trace to. With the Unix and DOS traceroute commands, you can use any of a number of command-line options to customize the report that the trace will give back to you. With web-based traceroute gateways, you may be able to specify which options you want, or a default set of options will be preselected.

How it Works

As the Unix man page for traceroute says, “The Internet is a large and complex aggregation of network hardware, connected together by gateways. Tracking the route your packets follow (or finding the miscreant gateway that’s discarding your packets) can be difficult. Traceroute utilizes the IP protocol “time to live” field and attempts to elicit an ICMP TIME_EXCEEDED response from each gateway along the path to some host.”

Traceroutes go from hop to hop, showing the path taken to site A from site B. Using the 8-bit TTL (“Time To Live”) in every packet header, traceroute tries to see the latency from each hop, printing the DNS reverse lookup as it goes (or showing the IP address if there is no name).

Traceroute works by sending a UDP (User Datagram Protocol) packet to a high-numbered port (which would be unlikely to be in use by another service), with the TTL set to a low value (initially 1). This gets partway to the destination and then the TTL expires, which provokes (if all goes as planned) an ICMP_TIME_EXCEEDED message from the router at which the TTL expires. This signal is what traceroute listens for.

After sending out a few of these (usually three) and seeing what returns, traceroute then sends out similar packets with a TTL of 2. These get two routers down the road before generating ICMP_TIME_EXCEEDED packets. The TTL is increased until either some maximum (typically 30) is reached, or it hits a snag and reports back an error.
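
The TTL-stepping loop is easier to see in a toy model than in a real network program (which would need raw sockets and usually root privileges). The Python sketch below is a simulation only, under assumptions of my own: the path is a made-up list of hop names, every router always answers, and the function name `simulate_traceroute` is hypothetical. The one detail not spelled out in the text is that the final host, having nothing listening on the high-numbered UDP port, answers with an ICMP port unreachable message rather than a time exceeded one; that is how the classic Unix traceroute knows it has arrived.

```python
def simulate_traceroute(path, max_ttl=30, probes_per_hop=3):
    """Simulate traceroute's TTL stepping over a known list of hops.

    `path` is a list of hop names in order; the last entry is the
    destination. Returns one (ttl, responder, reply_type) tuple per probe.
    """
    results = []
    # Start with TTL 1 and raise it by one each round, stopping at the
    # destination or at max_ttl, whichever comes first.
    for ttl in range(1, min(max_ttl, len(path)) + 1):
        # Each router decrements the TTL by one, so a probe sent with
        # this TTL expires at the ttl-th hop along the path.
        responder = path[ttl - 1]
        at_destination = ttl == len(path)
        reply = "PORT_UNREACHABLE" if at_destination else "TIME_EXCEEDED"
        # Send several probes per TTL value (three by default).
        results.extend((ttl, responder, reply) for _ in range(probes_per_hop))
    return results


if __name__ == "__main__":
    demo_path = ["router-a", "router-b", "www.example.test"]  # invented hops
    for ttl, responder, reply in simulate_traceroute(demo_path):
        print(ttl, responder, reply)
```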

The only mandatory parameter is the destination host name or IP number. The default probe datagram length is 38 bytes, but this may be increased by specifying a packet size (in bytes) after the destination host name.

What it Means

What traceroute tells you (assuming everything works) is how packets from you get to another specific destination, and how long they take to get there. Armed with a little knowledge about the way the Internet works, you can then make informed guesses about a number of things.

Getting There is Half the Fun

Let’s say that I’d like to know how traffic from my website is reaching the network of Sites On Line, a large online service. So, I run a traceroute to their network from my webserver.

traceroute to www.sol.com (127.188.146.18), 30 hops max, 40 byte packets

1 epsilon3.myisp.net (127.50.252.2) 1 ms 1 ms 1 ms

2 sc-mc-4-0-A-OC3.myisp.net (127.50.254.50) 1 ms 1 ms 1 ms

3 sol-hn-1-0-H-T3.myisp.net (127.50.254.58) 2 ms 2 ms 2 ms

4 gpopr-rre2-P2-2.sol.com (127.163.134.61) 2 ms 1 ms 2 ms

5 127.168.0.30 (127.168.0.30) 3 ms 3 ms 3 ms

6 www-dr4.rri.sol.com (127.188.128.254) 4 ms 7 ms 8 ms

You can see from this example that my traffic passes through a router at my ISP, then passes through what appears to be an OC-3, before entering a line that (judging by the name) is evidently a DS-3 gateway between my ISP and SOL. From there, it enters the GigaPop of SOL, passes through a router which doesn’t have a reverse lookup (I see only its IP address), and eventually reaches one last router (clearly marked as leading to its webserver) at SOL.
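
If you collect a lot of traces, it can help to break each hop line into its parts. The following Python sketch is one way to do that for output shaped like the trace above (hop number, name, IP address in parentheses, round-trip times in ms). The function name `parse_hop` and the dictionary keys are my own invention, and real traceroute output varies between systems, so treat the regular expressions as a starting point rather than a complete parser.

```python
import re

HOP_RE = re.compile(r"^\s*(\d+)\s+(.*)$")                    # "<hop number> <rest>"
HOST_RE = re.compile(r"(\S+)\s+\((\d+\.\d+\.\d+\.\d+)\)")    # "name (a.b.c.d)"
RTT_RE = re.compile(r"(\d+(?:\.\d+)?)\s*ms")                 # "12 ms" or "12ms"


def parse_hop(line):
    """Parse one hop line into a dict, or return None for non-hop lines."""
    match = HOP_RE.match(line)
    if not match:
        return None  # e.g. the "traceroute to ..." header line
    rest = match.group(2)

    host = HOST_RE.search(rest)
    name = host.group(1) if host else None
    ip = host.group(2) if host else None

    # Only look for round-trip times after the "name (ip)" part, so that
    # digits inside a hostname are never mistaken for a timing.
    timings = rest[host.end():] if host else rest
    rtts = [float(value) for value in RTT_RE.findall(timings)]

    return {
        "hop": int(match.group(1)),
        "name": name,
        "ip": ip,
        "rtts_ms": rtts,
        # With no reverse lookup, traceroute prints the IP address twice.
        "has_reverse_lookup": host is not None and name != ip,
    }


if __name__ == "__main__":
    print(parse_hop("5 127.168.0.30 (127.168.0.30) 3 ms 3 ms 3 ms"))
```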

Because of the way routing works, an ISP can really only control its inter-network traffic as far as choosing what to listen to from each peer or upstream, and then deciding which of those same contact points gets its outbound packets. So when you’re tracerouting from your network to another network, you’re getting a glimpse of how your neighbor network is announcing itself to the rest of the ‘Net. Because of the existence of this “asymmetric routing” in some circumstances, you should probably do two traces (one in each direction) between each two points you’re interested in.

Reading the T3 Leaves

While you can easily read the names that appear in the traceroute, interpreting them is a hazy enterprise. If you’ve got a pretty good feel for the topology of the Internet, the reverse lookups on your traceroute can (possibly) tell you a lot about the networks you’re passing through. Geographic details, line types and even bandwidth may be hinted at in the names – but hints are really all that one can expect. Since every large network names its routers and gateways differently, you can assume some things about them, but you can’t be sure. If you want to engage in router-spotting, note that common names may reflect:

• locations (a mae in the name might indicate a MAE connection, or la might indicate Los Angeles)

• line type (atm may indicate an ATM circuit as opposed to a clear-channel line)

• bandwidth (T3 or 45 is generally a dead giveaway, for example)

• a gateway (sometimes flagged as gw) to a customer’s network (sometimes referred to as cust or something similar)

• positions within the network (some lines may be named with core or border, or something similar)

• engineering senses of humor (as seen by the reference to Babylon 5 in my ISP’s network)

• the network whose router it is (almost always identifiable by the domain name; if the router doesn’t have a reverse lookup, you can perform an nslookup on the IP address to find out whose IP space it is in)

However, it should be reiterated here that amateur router-ology is a dangerous sport, since really the only people who understand a router’s name are the people that named it. So don’t get too upset when you think you’ve spotted someone routing your traffic through Nome, Alaska when in fact it was named by a Hobbit-obsessed engineer with bad spelling.
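
For the incurable router-spotter, the guessing game above can be written down as a lookup table. This Python sketch splits a router name on dots and hyphens and checks each piece against a handful of hints taken from the list above. The table `HINTS` and the function `guess_from_name` are hypothetical, the matches are guesses and nothing more, and a short token like la will produce plenty of false alarms.

```python
import re

# Hints drawn from the bullet list above. Every entry is only a guess.
HINTS = {
    "mae": "location: possibly a MAE exchange point",
    "la": "location: possibly Los Angeles",
    "atm": "line type: possibly an ATM circuit",
    "t3": "bandwidth: possibly a T3 (about 45 Mbps)",
    "45": "bandwidth: possibly a T3 (about 45 Mbps)",
    "gw": "role: possibly a gateway",
    "cust": "role: possibly a customer connection",
    "core": "position: possibly a core router",
    "border": "position: possibly a border router",
}


def guess_from_name(hostname):
    """Return (token, hint) pairs for the pieces of a router name."""
    guesses = []
    for token in re.split(r"[.\-]", hostname.lower()):
        # Try the token as-is first ("t3", "45"); otherwise drop trailing
        # digits so that "border1" or "gw11" still match "border" or "gw".
        key = token if token in HINTS else token.rstrip("0123456789")
        if key in HINTS:
            guesses.append((token, HINTS[key]))
    return guesses


if __name__ == "__main__":
    for token, hint in guess_from_name("192.ATM9-0-0.GW3.HUGE.NET"):
        print(token, "->", hint)
```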

Some Clues About Connectivity

A prospective ISP, prospectiveisp.net, tells you that it is fully peered. Is there any way that you can check up on this? Well, yes and no.

Traceroute can tell you whether two networks communicate directly, or through a third party. First, you traceroute from a traceroute page behind hugeisp.net to a location within prospectiveisp.net.

traceroute to www.prospectiveisp.net (127.50.225.13), 30 hops max, 40 byte packets

1 s8-3.oakland-cr2.hugeisp.net (127.0.68.77) 12 ms 17 ms 8 ms

2 h2-0-0.paloalto-br1.hugeisp.net (127.0.1.61) 15 ms 32 ms 12 ms

3 sl-bb10-sj-9-0.intermediary.net (127.232.3.25) 30 ms 15 ms 13 ms

4 sl-gw11-dc-8-0-0.intermediary.net (127.232.7.198) 82 ms 103 ms 73 ms

5 sl-prospective-1-0-0-T3.intermediary.net (127.228.220.14) 77 ms 74 ms 73 ms

6 border1-fddi.charlottesville.prospectiveisp.net (127.152.42.1) 121 ms 76 ms 75 ms

7 ns.prospectiveisp.net (127.50.225.13) 80 ms 79 ms 94 ms

It is evident from this traceroute that traffic between hugeisp.net and prospectiveisp.net travels through a third party. While this doesn’t say anything definite about their relationship, two networks will generally pass their traffic directly to each other if they are peers (barring strange routing circumstances or other arrangements). This doesn’t paint a full picture (and you should confirm this with a trace from prospectiveisp to hugeisp), but it gives you reason to doubt prospectiveisp’s claims of full peering.

Note that while traceroute can tell you whether two networks communicate directly or indirectly, it can’t tell you any more about their relationship. Even if two networks do communicate directly, traceroute can’t tell you whether their relationship is provider-customer or NAP peering (except perhaps through whatever hazy clues you obtain from router names or by calling a psychic hotline and reading them your trace).

In the above example, you might conclude that prospectiveisp buys transit or service from intermediary.net, which peers with (or buys service from) hugeisp.net. Of course, the opposite may be true – that prospectiveisp.net peers with intermediary.net, and hugeisp.net buys service from intermediary.net. However, common sense and a rough feel for the “pecking order” of first- and second-tier networks should guide your guesses here.
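
The “direct or through a third party” question can be answered mechanically from the hop names. The Python sketch below collapses a trace into the sequence of networks it passes through and reports anything sitting between the first and the last. It rests on a crude assumption of mine: that the network is the last two labels of each router name, which fails for names without a reverse lookup and for domains such as example.co.uk. The function names are made up for illustration.

```python
def network_of(hostname):
    """Crude guess at the owning network: the last two labels of the name."""
    labels = hostname.lower().rstrip(".").split(".")
    return ".".join(labels[-2:])


def networks_traversed(hop_names):
    """Collapse a list of hop names into the ordered list of networks seen."""
    sequence = []
    for name in hop_names:
        network = network_of(name)
        # Only record a network when it differs from the previous hop's.
        if not sequence or sequence[-1] != network:
            sequence.append(network)
    return sequence


def intermediaries(hop_names):
    """Networks seen strictly between the first and the last network."""
    return networks_traversed(hop_names)[1:-1]


if __name__ == "__main__":
    # Hop names from the hugeisp.net to prospectiveisp.net trace above.
    hops = [
        "s8-3.oakland-cr2.hugeisp.net",
        "h2-0-0.paloalto-br1.hugeisp.net",
        "sl-bb10-sj-9-0.intermediary.net",
        "sl-gw11-dc-8-0-0.intermediary.net",
        "sl-prospective-1-0-0-T3.intermediary.net",
        "border1-fddi.charlottesville.prospectiveisp.net",
        "ns.prospectiveisp.net",
    ]
    print(networks_traversed(hops))
    print("third parties:", intermediaries(hops))
```

An empty list of intermediaries only says the two networks hand traffic to each other directly in this direction; it says nothing about who is paying whom.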

Where’s the Traffic Jam?

Let’s say that you encounter some difficulty reaching the Somesite.Com website (you describe the site’s download speed as “glacial”), and decide to show off your newfound traceroute skills to investigate the cause of the problem. Here’s what you find:

traceroute to somesite.com (127.8.29.15), 30 hops max, 40 byte packets

1 epsilon3.yourisp.net (127.50.252.2) 1 ms 0 ms 1 ms

2 other-FDDI.yourisp.net (127.50.254.46) 2 ms 2 ms 2 ms

3 br2.tco1.huge.net (127.41.177.249) 6 ms 4 ms 22 ms

4 112.ATM2-0.XR2.HUGE.NET (127.188.160.94) 8 ms 28 ms 30 ms

5 192.ATM9-0-0.GW3.HUGE.NET (127.188.161.125) 8 ms 32 ms 28 ms

6 * * *

7 * somesite-gw.customer.HUGE.NET (127.130.32.234) 12 ms !A *

From this, you can guess (with a high degree of certainty) that the initial source of trouble is outside of your ISP’s network and somewhere along the route used by the site’s carrier, huge.net. Hop six shows some type of trouble, with none of the three packets sent to that gateway returning. Notice that the traceroute continues on to the next stop despite the failure at hop six. The problem there was also likely responsible for the loss of two of the packets sent to hop seven. Thankfully, one managed to get back and indicated the trace was complete.

Using the example above, you could ping the address reflected in hop seven and then compare it to a ping to, say, hop four or five, and see if loss of packets to hop seven versus hop five reflects what the traceroute indicated.
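
Before reaching for ping, you can tally the lost probes per hop straight from the trace. This Python sketch counts asterisks and timings on each line of output and reports the first hop that dropped anything. The helper names `probe_loss` and `first_lossy_hop` are my own, and the counting assumes output in the same shape as the example above (timings written as a number followed by ms).

```python
import re

TIMING_RE = re.compile(r"^\d+(?:\.\d+)?ms$")  # a timing glued together, e.g. "7ms"


def probe_loss(line):
    """Return (hop, lost, sent) for one hop line, or None for other lines."""
    fields = line.split()
    if not fields or not fields[0].isdigit():
        return None  # header line or blank line
    rest = fields[1:]
    lost = rest.count("*")  # each "*" is a probe that got no answer
    # Each answered probe shows up as "<number> ms" (or "<number>ms").
    answered = rest.count("ms") + sum(1 for f in rest if TIMING_RE.match(f))
    return int(fields[0]), lost, lost + answered


def first_lossy_hop(lines):
    """Return the number of the first hop that lost a probe, or None."""
    for line in lines:
        result = probe_loss(line)
        if result and result[1] > 0:
            return result[0]
    return None


if __name__ == "__main__":
    trace = [
        "5 192.ATM9-0-0.GW3.HUGE.NET (127.188.161.125) 8 ms 32 ms 28 ms",
        "6 * * *",
        "7 * somesite-gw.customer.HUGE.NET (127.130.32.234) 12 ms !A *",
    ]
    for line in trace:
        print(probe_loss(line))
    print("first lossy hop:", first_lossy_hop(trace))
```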

Selected Traceroute Error Messages:

• !H Host Unreachable

This frequently happens when the site or server is down.

• !N Network Unreachable

This can be caused by networks being down, or routers unable to transmit known routes to a network.

• !P Protocol Unreachable

This only happens when a router fails to carry a protocol that is used in the packet, like IPX.

• !S Source Route Failed

This can only happen if you are using the source route functions of traceroute (i.e., tracing from one remote site to another remote site). The site you are using source route tracing from must have source route tracing turned on, or it will not work.

• * TTL Time Exceeded

An asterisk is printed when no reply to a probe arrives within the timeout. This can happen when the path back exceeds the TTL limit, or when a router never sends an ICMP Time Exceeded message back to site A.

• !TTL is <=1

This happens any time the TTL is changed, via RIP or some other protocol, to a new TTL.
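
If you are scanning many traces, a small lookup table saves flipping back to this list. The Python sketch below maps the four unreachable-style flags listed above to their meanings and picks them out of a line of output. It deliberately covers only those flags; anything else starting with an exclamation point (different traceroute versions print different ones) is reported as unknown rather than guessed at. The names `ANNOTATIONS` and `explain_annotations` are invented for this example.

```python
# Flags and meanings exactly as listed above; other flags are left unknown.
ANNOTATIONS = {
    "!H": "Host Unreachable",
    "!N": "Network Unreachable",
    "!P": "Protocol Unreachable",
    "!S": "Source Route Failed",
}


def explain_annotations(line):
    """Return (flag, meaning) pairs for every !-flag in one output line."""
    explained = []
    for token in line.split():
        if token.startswith("!"):
            explained.append((token, ANNOTATIONS.get(token, "not in the list above")))
    return explained


if __name__ == "__main__":
    # An invented line of output, for illustration only.
    print(explain_annotations("4 gw.example.test (127.0.0.4) 12 ms !H *"))
```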

Caveat Tracerouter

Before you make too many decisions based on the results of traceroutes, you should be very aware that tracerouting is a complex phenomenon, and that plenty of otherwise innocuous things can interfere with it. For example, it may be possible to ping a site, but not to traceroute to it. This is because many routers are capable of being set to drop time exceeded packets, but not echo reply packets.

Traceroutes may return an unknown host response, but this frequently does not mean that the sites are down, or the network connection in between is faulty. Some domains are simply not mapped to be used without a third-level domain as part of the name. For example, tracerouting to aol.com will not work; but tracing to www.aol.com will.
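
The “try it again with www in front” habit is simple enough to automate. This Python sketch resolves a name and, if that fails, retries with a www. prefix before giving up. The function `resolve_with_www_fallback` and its `lookup` parameter are hypothetical; the default lookup uses the standard library’s `socket.gethostbyname`, which handles IPv4 only and needs a working resolver.

```python
import socket


def _lookup(name):
    """Return the IPv4 address for `name`, or None if it does not resolve."""
    try:
        return socket.gethostbyname(name)
    except OSError:  # socket.gaierror is a subclass of OSError
        return None


def resolve_with_www_fallback(name, lookup=_lookup):
    """Return (name_that_resolved, address), or None if nothing resolved.

    `lookup` is injectable so the fallback logic can be tried offline.
    """
    candidates = [name]
    if not name.startswith("www."):
        # Some domains only map a third-level name such as www.
        candidates.append("www." + name)
    for candidate in candidates:
        address = lookup(candidate)
        if address:
            return candidate, address
    return None


if __name__ == "__main__":
    # Offline demonstration with a fake lookup table (invented names).
    fake_dns = {"www.example.test": "127.0.0.1"}
    print(resolve_with_www_fallback("example.test", lookup=fake_dns.get))
```

A None result still doesn’t prove the site is down; it only means neither form of the name resolved from where you are sitting.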

In short, tracerouting is a valuable tool, but does not give a complete picture of a network’s status. Try to use as many gauges of network status as possible when attempting to debug an Internet connection.

Other Traceroute Resources:

http://boardwatch.internet.com/mag/96/dec/bwm38.html

A lengthy tutorial on Traceroute by Jack Rickard.

Traceroute.org directory of Traceroute Gateways

http://www.tracert.com/cgi-bin/trace.pl

The Multiple Simultaneous Traceroute Gateway

http://boardwatch.internet.com/traceroute.html

Boardwatch’s own list of traceroute servers

A handy site that allows you to trace from Digex’s network at MAE-East, MAE-West, Sprint NAP or PAIX.

http://www.sustworks.com/products/product_ipnm.html

Home Page for the program IP Net Monitor for MacOS